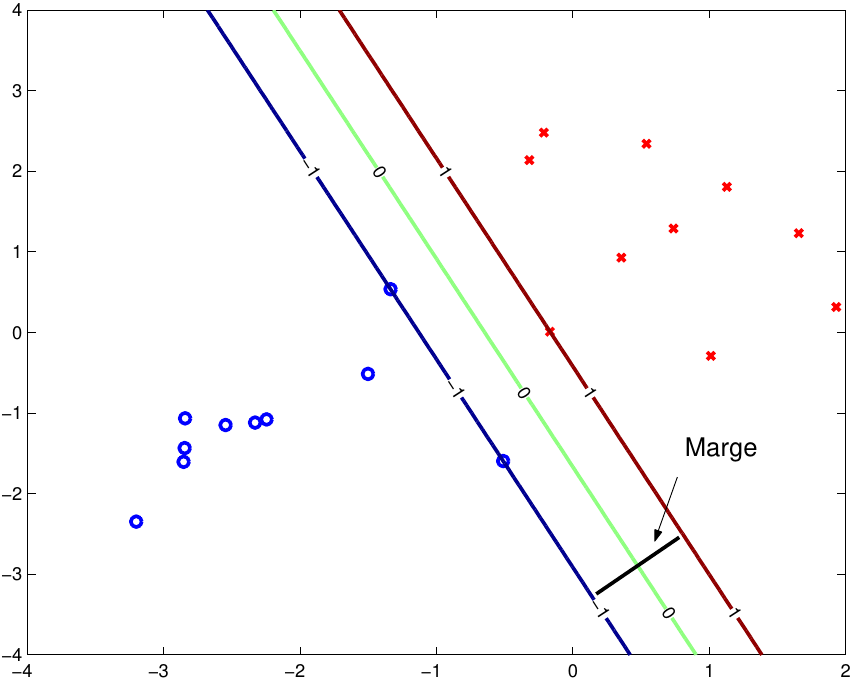

The Support Vector Machine (SVM) was first introduced by Vapnik in 1992 as a machine learning method that works on the principle of Structural Risk Minimization (SRM), which aims to find the best hyperplane separating two classes in the input space. The SVM is a linear classifier that can be viewed as an extension of the Perceptron, developed by Rosenblatt in 1958. The Perceptron is guaranteed to find a separating hyperplane if one exists; the SVM finds the maximum-margin separating hyperplane. Classification should then amount to something like taking the dot product of the weight vector with the feature vector of a new sample and comparing the result to zero. The quantity we ultimately want is $\|x_+ - x_-\|_2$, which is the geometric margin.

I am trying to plot the hyperplane for the model I trained with LinearSVC in sklearn. svm is used to train a support vector machine. It can be used to carry out general regression and classification (of nu and epsilon-type), as well as density estimation. fitcsvm supports mapping the predictor data using kernel functions, and supports sequential minimal optimization (SMO), iterative single data algorithm (ISDA), or L1 soft-margin minimization via quadratic programming.

The kernel trick is an effective computational approach for enlarging the feature space. It relies on the inner product of two vectors. The inner product of two r-vectors a and b, where a and b are nothing but two different observations, is defined as $\langle a, b \rangle = \sum_{i=1}^{r} a_i b_i$. Let's assume we have two vectors X and Z, both with 2-D data: a kernel is evaluated on X and Z directly, without ever building the enlarged feature vectors. Linear Kernel: It is just the dot product of all the features. Polynomial Kernel: It is a simple non-linear transformation of the data with a polynomial degree added. Gaussian Kernel: It is the most commonly used SVM kernel and is usually applied to non-linear data.
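To make the three kernels and the kernel trick concrete, here is a minimal sketch in plain Python. The function names, the example values of X and Z, and the parameters `degree`, `coef0` and `gamma` are assumptions chosen for illustration; they are not taken from any particular library. The sketch checks that a degree-2 polynomial kernel on two 2-D vectors gives the same number as an ordinary inner product in an explicitly enlarged 6-D feature space.

```python
import math

def linear_kernel(x, z):
    """Linear kernel: the plain dot product of the two feature vectors."""
    return sum(xi * zi for xi, zi in zip(x, z))

def polynomial_kernel(x, z, degree=2, coef0=1.0):
    """Polynomial kernel (x.z + coef0)^degree; parameter names are illustrative."""
    return (linear_kernel(x, z) + coef0) ** degree

def gaussian_kernel(x, z, gamma=0.5):
    """Gaussian (RBF) kernel exp(-gamma * ||x - z||^2); gamma is an assumed value."""
    sq_dist = sum((xi - zi) ** 2 for xi, zi in zip(x, z))
    return math.exp(-gamma * sq_dist)

def phi_degree2(x):
    """Explicit feature map whose inner product equals (x.z + 1)^2 for 2-D input."""
    x1, x2 = x
    r2 = math.sqrt(2.0)
    return [1.0, r2 * x1, r2 * x2, x1 * x1, x2 * x2, r2 * x1 * x2]

# Two example 2-D observations (values made up for this sketch).
X = [1.0, 2.0]
Z = [3.0, -1.0]

k_implicit = polynomial_kernel(X, Z)                         # computed in 2-D
k_explicit = linear_kernel(phi_degree2(X), phi_degree2(Z))   # computed in 6-D

print("linear:", linear_kernel(X, Z))
print("polynomial (kernel trick):", k_implicit)
print("polynomial (explicit map):", k_explicit)
print("gaussian:", gaussian_kernel(X, Z))
```

Both polynomial values agree (up to floating-point rounding), which is the point of the kernel trick: the enlarged feature space is used implicitly, and only inner products in the original space are ever computed.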
I believe that if you have just two classes, the model you get after running LIBSVM will contain a column of weights w that specifies the hyperplane. fitcsvm trains or cross-validates a support vector machine (SVM) model for one-class and two-class (binary) classification on a low-dimensional or moderate-dimensional predictor data set.

Let $x_+$ be a point among the positive examples such that $w^Tx_+ + w_0 = 1$, and let $x_-$ be a point among the negative examples such that $w^Tx_- + w_0 = -1$. Now, the distance between $x_+$ and $x_-$ will be the shortest when $x_+ - x_-$ is perpendicular to the hyperplane.
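From the two conditions on $x_+$ and $x_-$, the geometric margin can be worked out in a few steps. The scalar $\lambda$ below is a symbol introduced only for this sketch. Since $x_+ - x_-$ is perpendicular to the hyperplane, it is parallel to $w$:

$$
\begin{aligned}
x_+ - x_- &= \lambda w \\
w^T(x_+ - x_-) &= (w^Tx_+ + w_0) - (w^Tx_- + w_0) = 1 - (-1) = 2 \\
\lambda \|w\|_2^2 &= 2 \quad\Longrightarrow\quad \lambda = \frac{2}{\|w\|_2^2} \\
\|x_+ - x_-\|_2 &= \lambda \|w\|_2 = \frac{2}{\|w\|_2}
\end{aligned}
$$

So maximizing the geometric margin is the same as minimizing $\|w\|_2$ subject to every training point having functional margin at least 1.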

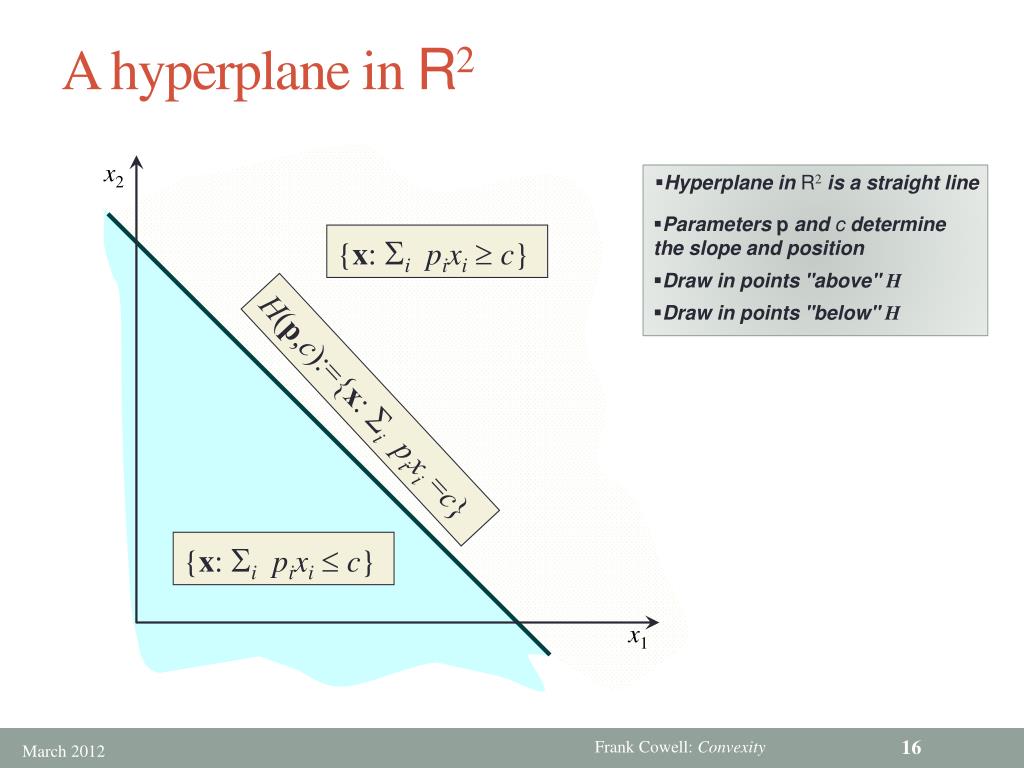

A support vector machine (SVM) is a supervised machine learning algorithm that can be used for both classification and regression tasks; an SVM classifier uses it to analyze and classify data.

What is a hyperplane? If we have a p-dimensional space, a hyperplane is a flat affine subspace of dimension p-1. For example, in two-dimensional space a hyperplane is a straight line, and in three-dimensional space a hyperplane is a two-dimensional plane.

The geometric margin is the shortest distance between points in the positive examples and points in the negative examples. The margin would be the minimal distance between the closest points in each group and your hyperplane. Those closest points in each group are called support vectors, by the way. The prediction function $f(\mathbf{x})$ depends only on the support vectors.

Now, the points that have the shortest distance as required above can have a functional margin greater than or equal to 1. However, let us consider the extreme case in which they are closest to the hyperplane, that is, the functional margin of the closest points is exactly equal to 1.
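The ideas of functional margin, support vectors and geometric margin can be checked numerically on a tiny example. The sketch below uses plain Python; the hyperplane (`w`, `w0`) and the four data points are hand-picked assumptions for illustration, not the output of a trained model. It classifies each point by the sign of $w^Tx + w_0$, picks out the points whose functional margin is exactly 1, and computes the geometric margin as $2/\|w\|_2$.

```python
import math

# Hypothetical hyperplane w^T x + w0 = 0 (hand-picked for this toy data, not fitted).
w = [1.0, 1.0]
w0 = -3.0

# Toy 2-D data as (point, label) pairs with labels +1 / -1 (made-up values).
data = [
    ([2.0, 2.0], 1),
    ([3.0, 3.0], 1),
    ([1.0, 1.0], -1),
    ([0.0, 0.0], -1),
]

def decision_value(x):
    """w^T x + w0; its sign gives the predicted class."""
    return sum(wi * xi for wi, xi in zip(w, x)) + w0

def predict(x):
    """Compare the decision value to zero to assign a class."""
    return 1 if decision_value(x) >= 0 else -1

w_norm = math.sqrt(sum(wi * wi for wi in w))

# Functional margin of each point: y * (w^T x + w0).
functional_margins = [y * decision_value(x) for x, y in data]

# Support vectors: points whose functional margin equals 1 (up to rounding).
support_vectors = [
    x for (x, y), m in zip(data, functional_margins) if abs(m - 1.0) < 1e-9
]

# Geometric margin between the two classes: 2 / ||w||.
geometric_margin = 2.0 / w_norm

for (x, y), m in zip(data, functional_margins):
    print(x, "label", y, "predicted", predict(x), "functional margin", m)
print("support vectors:", support_vectors)
print("geometric margin:", geometric_margin)
```

In this toy setup the two support vectors are one from each class, and the distance between them equals the geometric margin because their difference is parallel to `w`.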
